One thing that has become clearer to me is how quickly the discussion moves past capability.

It is relatively easy now to connect models to data and systems. The Model Context Protocol (MCP), agents, and similar approaches have made that part much more accessible. You can expose resources, define actions, and let the model move through them with very little friction.

That part is moving fast.

What seems less developed is what happens around it.

If you step back and look at it structurally, a model is still just a function that produces the next step given a context. The behaviour we interpret as “work” comes from placing that function inside a loop where it can read, transform, and affect state over time.

read → transform → propose → execute → new state
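To make the loop concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: `propose_next_step` is a hypothetical stand-in for a model call, and the "system" is just a dictionary with a counter. It only shows the shape of the loop, not how any particular agent framework implements it.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Proposal:
    action: str
    argument: int


def propose_next_step(context: dict) -> Proposal:
    # Hypothetical stand-in for a model: context in, next step out.
    return Proposal(action="increment", argument=1)


def run_loop(state: dict, model: Callable[[dict], Proposal], steps: int) -> dict:
    for _ in range(steps):
        context = dict(state)        # read: snapshot the current state
        proposal = model(context)    # transform + propose: the model picks a step
        if proposal.action == "increment":
            # execute: apply the proposal, producing the new state
            state = {**state, "counter": state["counter"] + proposal.argument}
    return state


final_state = run_loop({"counter": 0}, propose_next_step, steps=3)
```

The model itself never changes anything here. The change comes from the `execute` branch of the surrounding loop, which is the part that matters once the state is a real system.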

Once that loop is connected to real systems, the model becomes part of something that changes things, not just describes them.

And that is where the nature of the problem changes.

In simpler environments, this works surprisingly well. If the system is small, the data is consistent, and the consequences are limited, you can allow the model a fair amount of freedom. It can read broadly, combine information, and act, and the results are often useful.

There is very little resistance in the system.

In a company, that changes.

Not because the model behaves differently, but because the system it is interacting with is more complex.

Data is distributed across multiple systems. It has different owners. It is used for different purposes. Some of these boundaries are explicit, many are not, but they still exist.

What becomes noticeable quite quickly is that access, in the traditional sense, is not the main issue.

A model can often be given access to data without much difficulty.

The more subtle question is what happens when it starts to use that data.

A model that has access to multiple sources will tend to combine them. That is how it produces more coherent outputs. In many cases, that is exactly what you want.

But there are situations where the combination itself is sensitive, even if each individual source is not.

That is not something most systems are set up to express clearly today.
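One way to picture what "expressing it" could look like is a rule over sets of sources rather than over single sources. The sketch below is an assumption, not an existing product feature: the source names (`hr_salaries`, `org_chart`, `crm`) and the `FORBIDDEN_COMBINATIONS` table are hypothetical.

```python
from typing import FrozenSet, Iterable, Set

# Hypothetical rule table: each source is assumed readable on its own,
# but the listed sets may not appear together in one context.
FORBIDDEN_COMBINATIONS: Set[FrozenSet[str]] = {
    frozenset({"hr_salaries", "org_chart"}),
}


def combination_allowed(sources_in_context: Iterable[str]) -> bool:
    used = frozenset(sources_in_context)
    # Deny if any forbidden set is fully contained in what is being combined.
    return not any(combo <= used for combo in FORBIDDEN_COMBINATIONS)
```

With this table, `combination_allowed(["hr_salaries", "crm"])` passes while `combination_allowed(["hr_salaries", "org_chart"])` does not, even though access to each source on its own would be granted.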

A similar thing happens with sequence.

In many processes, the order of steps is part of how the system is meant to work. Certain information is introduced later. Certain actions require a pause or a review. Different roles influence different parts of the flow.

A model operating freely does not naturally take that into account. It will use whatever is available whenever doing so improves the result.

From one perspective, that is efficient.

From another, it can bypass how the process is intended to function.
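A small sketch of what respecting sequence might mean in code, assuming a hypothetical three-step process (`draft_offer`, `human_review`, `send_offer`). The step names and the `REQUIRED_BEFORE` table are made up for illustration. The point is only that the order lives outside the model and is checked before a step is executed.

```python
from dataclasses import dataclass, field

# Hypothetical process definition: each step lists the steps that must
# already be completed before it may run.
REQUIRED_BEFORE = {
    "draft_offer": set(),
    "human_review": {"draft_offer"},
    "send_offer": {"draft_offer", "human_review"},
}


@dataclass
class ProcessState:
    completed: set = field(default_factory=set)

    def try_step(self, step: str) -> bool:
        # Refuse the step if any prerequisite has not happened yet.
        missing = REQUIRED_BEFORE[step] - self.completed
        if missing:
            return False
        self.completed.add(step)
        return True
```

An agent that proposes `send_offer` straight away is refused here, even if it already has everything it needs to produce a good offer.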

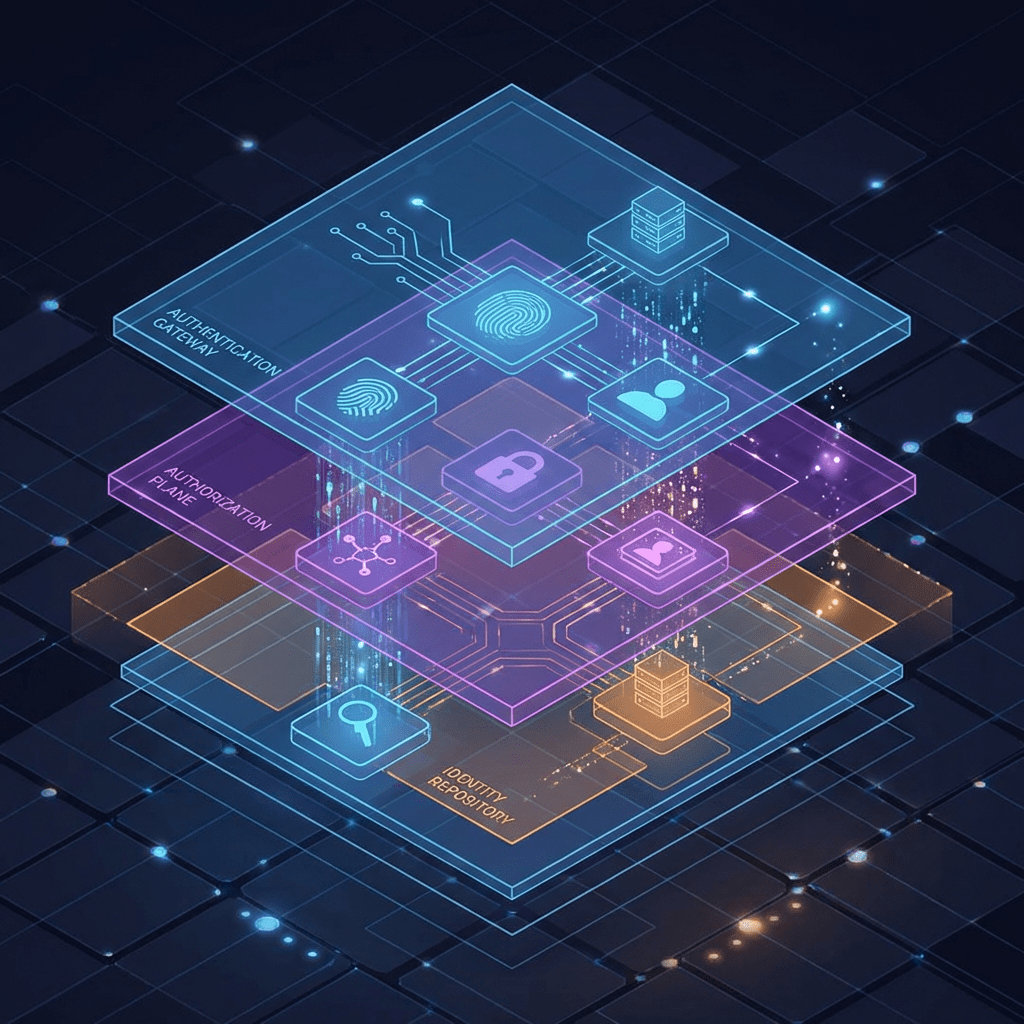

This is where I think the idea of permission frameworks becomes useful.

Not as a replacement for existing access control, but as an extension of it.

Something that can describe not only:

- who an agent is acting on behalf of
- what it is allowed to do

but also:

- when it is allowed to do it
- and how different pieces of data are allowed to be used together
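As a rough illustration, the four dimensions above could be captured in a single record and checked together. This is a sketch under assumptions, not a description of any existing framework: the `Permission` and `Request` types, their field names, and the idea of modelling "when" as a named process phase are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Permission:
    on_behalf_of: str                                  # who
    allowed_actions: FrozenSet[str]                    # what
    allowed_phases: FrozenSet[str]                     # when (as a process phase)
    forbidden_combinations: FrozenSet[FrozenSet[str]]  # how data may be combined


@dataclass(frozen=True)
class Request:
    principal: str
    action: str
    phase: str
    sources: FrozenSet[str]


def is_permitted(p: Permission, r: Request) -> bool:
    # All four dimensions must hold at once for the request to pass.
    return (
        r.principal == p.on_behalf_of
        and r.action in p.allowed_actions
        and r.phase in p.allowed_phases
        and not any(c <= r.sources for c in p.forbidden_combinations)
    )
```

Traditional access control roughly covers the first two fields. The last two are the extension: the same principal and action can be allowed in one phase or with one set of sources, and denied in another.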

If you look at how the ecosystem is evolving, it seems like different approaches are starting to form around this.

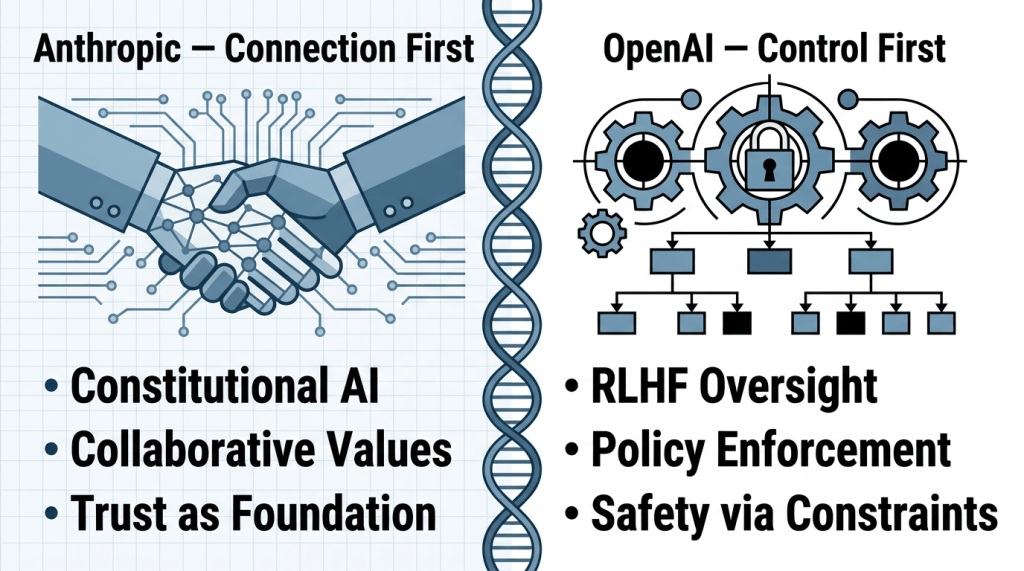

Anthropic has focused on making it easy to connect models to systems. MCP provides a clean way to expose resources and actions, and the model can begin to operate with very little setup. The control layer is there, but relatively light, and often sits close to the data.

OpenAI appears to be moving toward more explicit structure. Tools, workflows, and tracing make it easier to define what happens and in what order. The model becomes part of a flow that is already shaped by the surrounding system.

Looking beyond these, there are also signs that the market is starting to explore this more directly. Policy engines, identity-based execution, and governance layers are being adapted to agent behaviour, though mostly in pieces rather than as a single coherent solution.

It is still early, and none of these approaches feel complete on their own.

But taken together, they suggest that something is forming.

We already know how to define identity. We know how to control access. We know how to orchestrate workflows.

What seems less mature is the layer that brings these together for systems where behaviour is not fully predetermined.

If this holds, then the next phase of enterprise AI may not be defined by better models.

It may be defined by how we describe and enforce what those models are allowed to do once they are connected to real systems.

Not just in terms of access, but over time, across systems, and within context.

That feels like a problem worth exploring further.

#EnterpriseAI #AIAgents #AIOrchestration #AIArchitecture #SystemsThinking #EnterpriseArchitecture #DataGovernance #AgenticAI
